Protest against police violence in Minneapolis, Minnesota. Photo by Lorie Shaull via Flickr (CC BY-SA 2.0)

This is the RADAR, Ranking Digital Rights’ newsletter. This edition was sent on July 16, 2020. Subscribe here to get The Radar by email.

Since our last newsletter, we’ve published our methodology for the 2020 RDR Index, which will evaluate two new companies, Amazon and Alibaba, and include new indicators on targeted advertising and algorithmic systems. Amid a global pandemic and mass protests against systemic racism, this might not sound like big news. But as issues like free speech, police surveillance, and contact-tracing technology dominate our daily conversations, we’re seeing how tech companies’ algorithms and ad-targeting systems are having real-life implications at this watershed moment in history.

Several companies in the RDR Index have made headlines to this effect in recent weeks. After years of pressure from groups like the Algorithmic Justice League, Microsoft vowed to stop selling facial recognition software until the U.S. has “a national law in place, grounded in human rights” to govern it. Amazon announced a one-year moratorium on police use of its Rekognition software, leaving plenty of room for improvement.

More than 500 companies, including Coca-Cola, Unilever, Target, and Verizon, have pulled their ads from Facebook in response to #StopHateforProfit, a coalition-led campaign calling on the company to stop the proliferation of white supremacist content, incitement to violence, and messages of voter suppression across its platform, and to build stronger mechanisms for ensuring accountability and transparency.

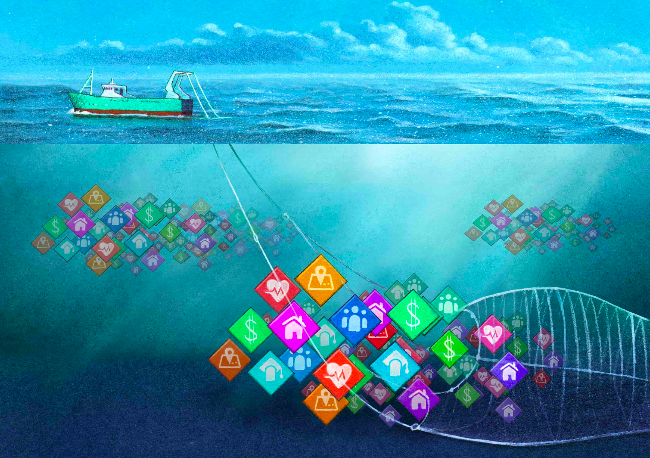

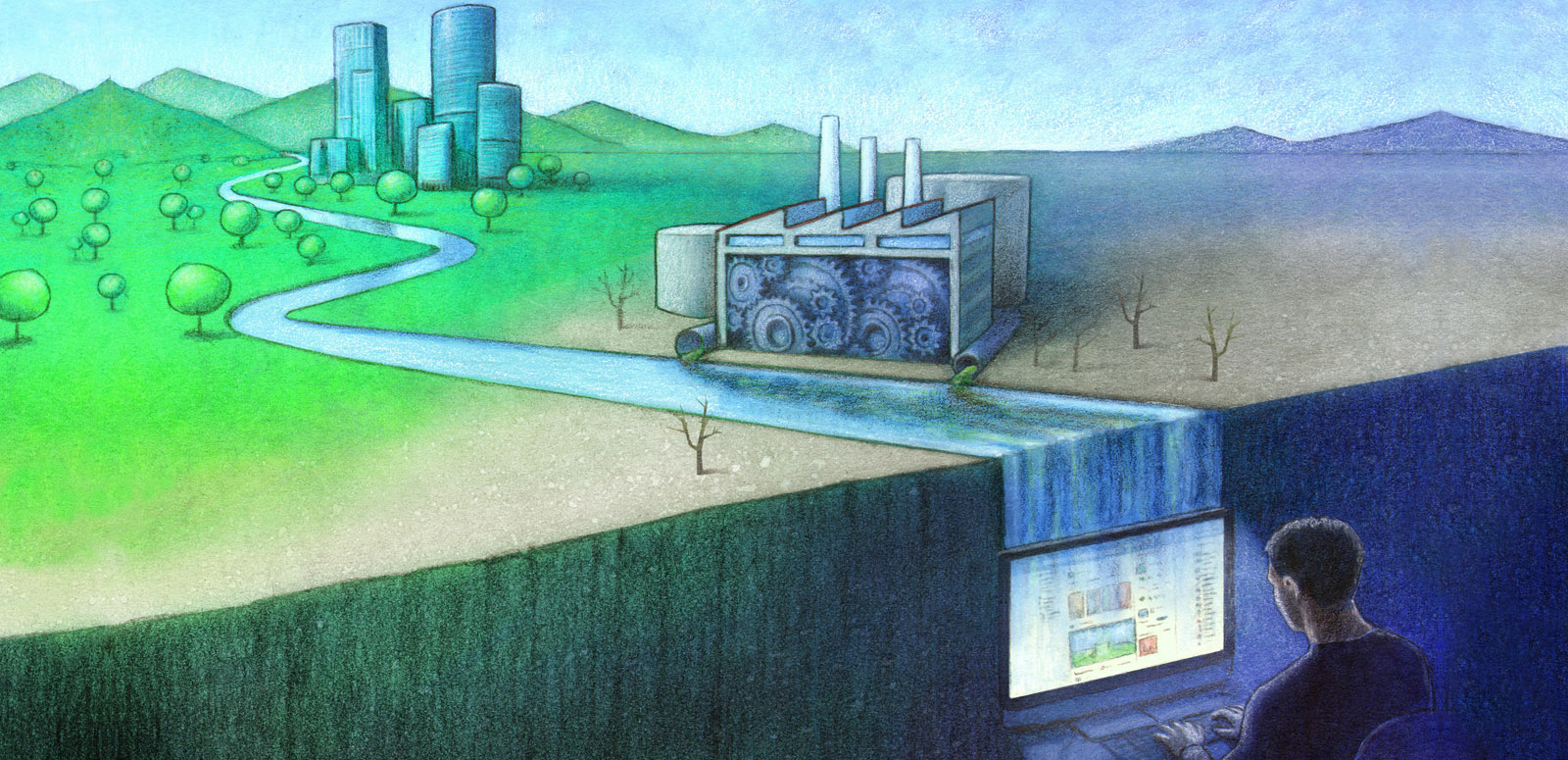

But it’s not just hateful or misleading content that’s the problem — it’s the business model. The underlying logic of #StopHateforProfit — that Facebook will continue to allow problematic content for as long as it is profitable — goes hand-in-hand with the key argument in It’s the Business Model, our spring 2020 series. In these two reports, we showed how targeted advertising and algorithms drive the amplification of misleading and hateful content (and skyrocketing profits) at Big Tech firms, often at the expense of democracy and human rights.

RDR Senior Policy Analyst Nathalie Maréchal spoke about our “It’s the Business Model” report series at a virtual panel discussion with the Open Technology Institute, Amnesty International, and the National Fair Housing Alliance. Watch the video here.

We are also worried about free speech in the Trump era. In the face of heavy public criticism over his handling of the pandemic and the protest movement, the U.S. president is increasingly turning to authoritarian-style threats and tactics. In an op-ed for CNN, RDR Director Rebecca MacKinnon compared Trump’s perennial attacks on legitimate media outlets and social media companies — including his recent Executive Order targeting Twitter — to those of China. “Authoritarian leaders like China’s Xi Jinping bend the law to serve their purposes and reinforce their power,” she wrote. “Trump is trying to do the same.”

And then there’s the shakeup at the U.S. Agency for Global Media. In addition to firing several people in lead editorial roles, the new regime has posed an existential threat to the Open Technology Fund (OTF), a leading supporter of developers working on open-source, privacy-protecting technologies like Signal, the secure messaging app used by protesters from Hong Kong to Minneapolis. We don’t yet evaluate these tools, but we applaud the standards of transparency and openness that OTF has built into its processes.

As MacKinnon put it in a piece for Slate, “Their open research, computer code, and security training techniques are being used around the world by all sorts of people. They are helping everybody everywhere who dares to speak truth to power…”

More on the 2020 RDR Index

The 2020 RDR Index (due out in February 2021) will include new indicators that set global accountability and transparency standards for how companies can demonstrate respect for human rights online as they develop and deploy targeted advertising and algorithmic systems.

We also expanded the RDR Index to include Amazon and Alibaba, two of the world’s largest e-commerce companies. This means we’ll incorporate two new services — e-commerce platforms and “personal digital assistant ecosystems” — into the 2020 RDR Index methodology. Read all about our methodology revision process, or see the full roster of indicators for 2020.

Pings: RDR in the news

Hong Kongers recently went to the polls but faced technical challenges when the iOS PopVote app, a key tool for the citizen-led voting process, malfunctioned and Apple failed to respond to maintenance requests. While Google, Twitter, and Facebook have halted data-processing requests from authorities in light of the new national security law, Apple has yet to respond, adding to activists’ frustrations with the company. RDR Director Rebecca MacKinnon shared her perspective with Quartz: “It’s a situation in which the companies have to decide which bad options they want to go for. It’s hard to see how they can remain in Hong Kong and not be complicit.”

A new test feature on Twitter attempts to slow the flow of disinformation by suggesting users read an article before retweeting a link to it. Consumer Reports interviewed RDR Senior Policy Analyst Nathalie Maréchal about the development and rollout of the test: “I’m glad to see that Twitter is thinking about how to address some of the endemic problems on the platform,” she said. “But since they are, in effect, running live experiments on their users, it would be good to see more transparency about the process.”

Speaking with Slate about the #StopHateforProfit campaign, RDR Director Rebecca MacKinnon pointed out that ad revenue losses could also put investors at risk: “If I was a mutual fund with major holdings with Facebook, I would be [saying to them] ‘You have a serious problem here, with parts of the market not wanting to be associated with what you seem to represent now.’”

Internet shutdowns are defining India’s national psyche, according to Thought Jungle’s Toya Singh. She cited scholarly research by RDR Company Engagement Lead Jan Rydzak that examines the connection between network shutdowns and collective action responses in India.

Our research on human rights risks associated with algorithms and AI was cited multiple times in “Spotlight on Artificial Intelligence and Freedom of Expression,” a new report from the OSCE’s Representative on Freedom of the Media.

Ranking Digital Rights’ 2018 assessment of Telefónica was included in a GSMA deep dive report presenting human rights guidance for the mobile industry. Among companies ranked by RDR in 2018, Telefónica disclosed the most about its policies impacting freedom of speech and government requests to restrict content and services.

RDR staff and partners at RightsCon Tunis, 2019.

Where in the world is RDR?

RightsCon Online 2020: Our team will participate in multiple sessions at RightsCon Online, hosted by Access Now. These will include:

Real Corporate Accountability For Surveillance Capitalism: Setting The Civil Society Agenda For The 2020s

July 28 at 11:30am EDT

Speakers: RDR Senior Policy Analyst Nathalie Maréchal, Shoshana Zuboff (Harvard Business School), Joe Westby (Amnesty International), and Chris Gilliard (digital privacy scholar)

Digital Curfew in a Conflict Zone and its Impact on Gender Rights

July 31 at 5:45am EDT

Speakers: RDR’s Jan Rydzak, Tanzeel Khan (digital rights activist), Radhika Jhalani (Software Freedom Law Centre India), and Kris Ruijgrok (Open Technology Fund Fellow)

Is The Tech Greener On The Other Side? Benchmarking Tech Companies’ Environmental Sustainability

July 30 at 9:00am EDT

Speakers: RDR’s Nathalie Maréchal, Jan Rydzak and Zak Rogoff

How do we know we can trust you? Weighing the strength of platforms’ commitments to provide content moderation appeals

July 27 at 11:15am EDT

Speakers: RDR’s Zak Rogoff, Tomiwa Ilori (University of Pretoria), Spandana Singh (Open Technology Institute), Jeremy Malcolm (Prostasia Foundation), Kim Malfacini (Facebook)

(Un)free Speech: When Algorithms Decide

July 27 at 12:30pm EDT

Speakers: RDR’s Nathalie Maréchal, Camille François (Graphika), Natali Helberger (University of Amsterdam), Julia Haas (OSCE Office of the Representative on Freedom of the Media)

Public Knowledge: How Do We Move Beyond Consent Models in Privacy Legislation?

July 29 at 1:30pm EDT

RDR’s Nathalie Maréchal will join Senator Sherrod Brown, Joseph Turow (University of Pennsylvania), Stephanie Nguyen (Consumer Reports), and Yosef Getachew (Common Cause) to discuss viable frameworks for federal privacy legislation.

The scan: What we’re reading

America the Unexceptional: In an essay for Foreign Policy, UN Special Rapporteur on Freedom of Expression David Kaye analyzes the U.S. government’s history of focusing on human rights abroad, while turning a blind eye to rights violations at home, particularly against Black Americans. Pointing to segregationists who fought to keep the U.S. from engaging in the international human rights system, Kaye writes: “racism and white supremacy drove the American refusal to enforce human rights at home, and that legacy of hypocrisy shapes human rights policy today.”

What does it mean to see privacy as a civil rights struggle? Writing for Salon, UC San Diego Chief Information Security Officer Michael Corn argues that “we’re losing the war against surveillance capitalism because we let Big Tech frame the debate.” For Corn, in a digital world, privacy is the spine of the body politic, forming a barrier between civil society and racial, political, and religious profiling, and shaping culture at large.

In The Data Delusion, Luminate Managing Director Martin Tisné looks ahead to legal implications and policy decisions invoked by machine learning. He writes: “Solutions lie in hard accountability, strong regulatory oversight of data-driven decision making, and the ability to audit and inspect the decisions and impacts of algorithms on society.”

Put us on your radar! Subscribe to The RADAR to receive our newsletter by email.