Posted at 06:04h in Featured, News

by Afef Abrougui

This post is published as part of an editorial partnership between Global Voices and Ranking Digital Rights.

Raqqa, Syria, in August 2017. Videos of the war posted on YouTube by media and rights groups started disappearing after the platform introduced a new AI system targeting terrorist content. Image via Wikimedia Commons by Mahmoud Bali (VOA) [Public domain]

A new video on Orient News’ YouTube channel shows a scene that is all too familiar to its regular viewers. Staff at a surgical hospital in Syria’s Idlib province rush to operate on a man who has just been injured in an explosion. The camera pans downward and shows three bodies on the floor. One lies motionless. The other two are covered with blankets. A man bends over and peers under the blanket, perhaps to see if he knows the victim.

Syrian media outlet Orient News is one of several smaller media outlets that have played a critical role in documenting Syria’s civil war and putting video evidence of violence against civilians into the public eye. Active since 2008, the group is owned and operated by a vocal critic of the Assad regime.

Alongside the outlet’s own distribution channels, YouTube has been an instrumental vehicle for bringing videos like this one to a wider audience. Or at least it was, until August 2017 when, without warning, Orient News’ YouTube channel was suspended.

Orient News made inquiries, as did other small media outlets that had also seen some of their videos disappear, including Bellingcat, Middle East Eye and the Syrian Archive. It came to light that YouTube had taken down hundreds of videos that appeared to include “extremist” content.

But these groups were puzzled. They had been posting their videos, which typically include captions and contextual details, for years. Why were they suddenly seen as unsafe for YouTube’s massive user base?

Because there was a new kind of authority calling the shots.

Just before the mysterious removals, YouTube announced its deployment of artificial intelligence technology to identify and censor “graphic or extremist content,” in order to crack down on ISIS and similar groups that have used social media (including YouTube, Twitter and the now defunct Google Plus) to post gruesome footage of executions and to recruit fighters.

Thousands of videos documenting war crimes and human rights violations were swept up and censored in this AI-powered purge. After the groups questioned YouTube about the move, the company admitted that it had made the “wrong call” on several videos and reinstated them. Others remained banned for “violent and graphic content.”

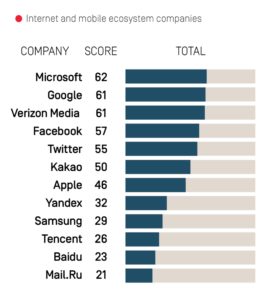

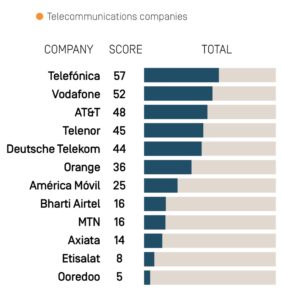

YouTube’s hasty removal of these videos highlights the problems of using automated tools to flag and remove materials — and why platforms need to be more transparent about their processes for policing content. Even when platforms like YouTube, Facebook, Instagram, and Twitter are clear about what types of content are banned, few provide clear information about what content they remove and why. This makes it difficult for users to understand why content has been removed and how to seek remedy when their rights are violated.

The myth of self-regulation

Companies like Google (parent of YouTube), Facebook and Twitter have legitimate reasons to take special measures when it comes to graphic violence and content associated with violent extremist groups — it can lead to situations of real-life harm and can be bad for business too. But the question of how they should identify and remove these kinds of content — while preserving essential evidence of war crimes and violence — is far from answered.

The companies have developed their policies over the years to acknowledge that not all violent content is intended to promote or incite violence. While YouTube, like other platforms, does not allow most extremist or violent content, it does allow users to publish such content in “a news, documentary, scientific, or artistic context,” encouraging them to provide contextual information about the video.

But the policy cautions: “In some cases, content may be so violent or shocking that no amount of context will allow that content to remain on our platforms.” YouTube offers no public information describing how its internal mechanisms determine which videos are “so violent or shocking.”

This approach puts the company in a precarious position. It is assessing content intended for public consumption, yet it has no mechanisms for ensuring public transparency or accountability about those assessments. The company is making its own rules and changing them at will, to serve its own best interests.

EU proposal could make AI solutions mandatory

A committee in the European Commission is threatening to intervene in this scenario, with a draft regulation that would force companies to step up their removal of “terrorist content” or face steep fines. While the proposed regulation would break the cycle of companies attempting and often failing to “self-regulate,” it could make things even worse for groups like Orient News.

Under the proposal, aimed at “preventing the dissemination of terrorist content online,” service providers are required to “take proactive measures to protect their services against the dissemination of terrorist content.” According to Article 6(2), these include the use of automated tools to: “(a) effectively address the re-appearance of content which has previously been removed or to which access has been disabled because it is considered to be terrorist content; (b) detect, identify and expeditiously remove or disable access to terrorist content.”

If adopted, the proposal would also require “hosting service providers [to] remove terrorist content or disable access to it within one hour from receipt of the removal order.”

It would further grant law enforcement or Europol the power to “send a referral” to hosting service providers for their “voluntary consideration.” The service provider would then assess the referred content “against its own terms and conditions and decide whether to remove that content or to disable access to it.”

The draft regulation demands more aggressive deletion of terrorist content, and quick turnaround times on its removal. But it does not establish a special court or other judicial mechanism that can offer guidance to companies struggling to assess complex online content.

Instead, it would force hosting service providers to use automated tools to prevent the dissemination of “terrorist content” online. This would require companies to use the kind of system that YouTube has already put into place voluntarily.

The EU proposal puts a lot of faith in these tools, ignoring the fact that users, technical experts, and even legislators themselves remain largely in the dark about how these technologies work.

Can AI really assess the human rights value of a video?

Automated tools may be trained to assess whether a video is violent or graphic. But how do they determine the video’s intended purpose? How do they know if the person who posted the video was trying to document the human cost of conflict? Can these technologies really understand the context in which these incidents take place? And to what extent do human moderators play a role in these decisions?

We have almost no answers to these questions.

“We don’t have the most basic assurances of algorithmic accountability or transparency, such as accuracy, explainability, fairness, and auditability. Platforms use machine-learning algorithms that are proprietary and shielded from any review,” wrote WITNESS’ Dia Kayyali in a December 2018 blog post.

The proposal’s critics argue that forcing all service providers to rely on automated tools in their efforts to crack down on terrorist and extremist content, without transparency and proper oversight, is a threat to freedom of expression and the open web.

The UN special rapporteurs on the promotion and protection of the right to freedom of opinion and expression; the right to privacy; and the promotion and protection of human rights and fundamental freedoms while countering terrorism have also expressed their concerns to the Commission. In a December 2018 memo, they wrote:

“Considering the volume of user content that many hosting service providers are confronted with, even the use of algorithms with a very high accuracy rate potentially results in hundreds of thousands of wrong decisions leading to screening that is over — or under — inclusive.”
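The rapporteurs’ point comes down to simple arithmetic: the expected number of wrong decisions is the number of items screened multiplied by the error rate. The Python sketch below makes that concrete. The helper name `expected_errors`, the daily volume of 10 million uploads and the accuracy levels are hypothetical round numbers chosen for illustration, not figures from YouTube, the Commission or the rapporteurs, and the sketch assumes every item is equally likely to be misjudged.

```python
def expected_errors(items_screened: int, accuracy: float) -> int:
    """Hypothetical helper: expected number of wrong decisions when a
    classifier with the given accuracy screens items_screened pieces of
    content. Assumes the same error rate applies to every item."""
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    # Wrong decisions = volume x error rate, rounded to a whole count.
    return round(items_screened * (1.0 - accuracy))


# Hypothetical volume: 10 million uploads screened per day.
daily_uploads = 10_000_000
for acc in (0.99, 0.999, 0.9999):
    errors = expected_errors(daily_uploads, acc)
    print(f"{acc:.2%} accurate -> about {errors:,} wrong decisions per day")
```

Under these assumptions, a 99 percent accurate filter makes about 100,000 wrong calls a day, and even a 99.99 percent accurate one makes about 1,000. A single accuracy figure also says nothing about how those errors split between over-inclusive removals, such as documentation of war crimes, and under-inclusive misses.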

In recital 18, the proposal outlines measures that hosting service providers can take to prevent the dissemination of terror-related content, including the use of tools that would “prevent the re-upload of terrorist content.” Commonly known as upload filters, such tools have been a particular concern for European digital rights groups. The issue first arose during the EU’s push for a Copyright Directive, which would have required platforms to verify the ownership of a piece of content when it is uploaded by a user.
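One simple way a re-upload filter could work is to store a fingerprint of every removed file and check each new upload against that list. The Python sketch below is a hypothetical illustration, not any platform’s or the proposal’s actual design; the class `ReuploadFilter` and its methods are invented for this example. It uses exact SHA-256 hashes, so changing a single byte evades it, and any fuzzier matching would trade that weakness for a greater chance of flagging different but similar files. Either way, the filter sees only the file, not who uploaded it or why.

```python
import hashlib


class ReuploadFilter:
    """Hypothetical re-upload filter: remembers fingerprints of removed
    files and blocks any later upload whose bytes are identical."""

    def __init__(self) -> None:
        self._removed: set[str] = set()

    @staticmethod
    def fingerprint(data: bytes) -> str:
        # Exact cryptographic hash: identical bytes give identical digests.
        return hashlib.sha256(data).hexdigest()

    def record_removal(self, data: bytes) -> None:
        # Remember the fingerprint of content that has been taken down.
        self._removed.add(self.fingerprint(data))

    def allows(self, data: bytes) -> bool:
        # Only the file's bytes are checked: no uploader, caption or context.
        return self.fingerprint(data) not in self._removed


f = ReuploadFilter()
clip = b"example video bytes"
f.record_removal(clip)
print(f.allows(clip))            # False: same bytes, blocked whoever uploads them
print(f.allows(clip + b"\x00"))  # True: one extra byte evades an exact-hash filter
```

In this sketch, a news outlet re-posting the identical clip with explanatory captions in its description would be blocked just as a propaganda account would, because the match depends only on the file itself.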

“We’re fearful of function creep,” Evelyn Austin from the Netherlands-based digital rights organization Bits of Freedom said at a public conference.

“We see as inevitable a situation in which there is a filter for copyrighted content, a filter for allegedly terrorist content, a filter for possibly sexually explicit content, one for suspected hate speech and so on, creating a digital information ecosystem in which everything we say, even everything we try to say, is monitored.”

Austin pointed out that these mechanisms undercut previous strategies that relied more heavily on the use of due process.

“Upload filtering … will replace notice-and-action mechanisms, which are bound by the rule of law, by a process in which content is taken down based on a company’s terms of service. This will strip users of their rights to freedom of expression and redress…”

The draft EU proposal also applies stiff financial penalties to companies that fail to comply. For a single company, this can amount to up to 4 percent of its global turnover from the previous business year.

French digital rights group La Quadrature du Net offered a firm critique of the proposal, and noted the limitations it would set for smaller websites and services:

“From a technical, economical and human perspective, only a handful of providers will be able to comply with these rigorous obligations – mostly the Web giants.

To escape heavy sanctions, the other actors (economic or not) will have no other choice but to close down their hosting services.”

“Through these tools,” they warned, “these monopolistic companies will be in charge of judging what can be said on the Internet, on almost any service.”

Indeed, worse than encouraging “self-regulation,” the EU proposal will take us further away from a world in which due process or other publicly bound mechanisms are used to decide what we say and see online, and push us closer to relying entirely on proprietary technologies to decide what kinds of content are appropriate for public consumption, with no mechanism for public oversight.